Wireless Monitoring of a Car Driver’s Brain State on the Cloud

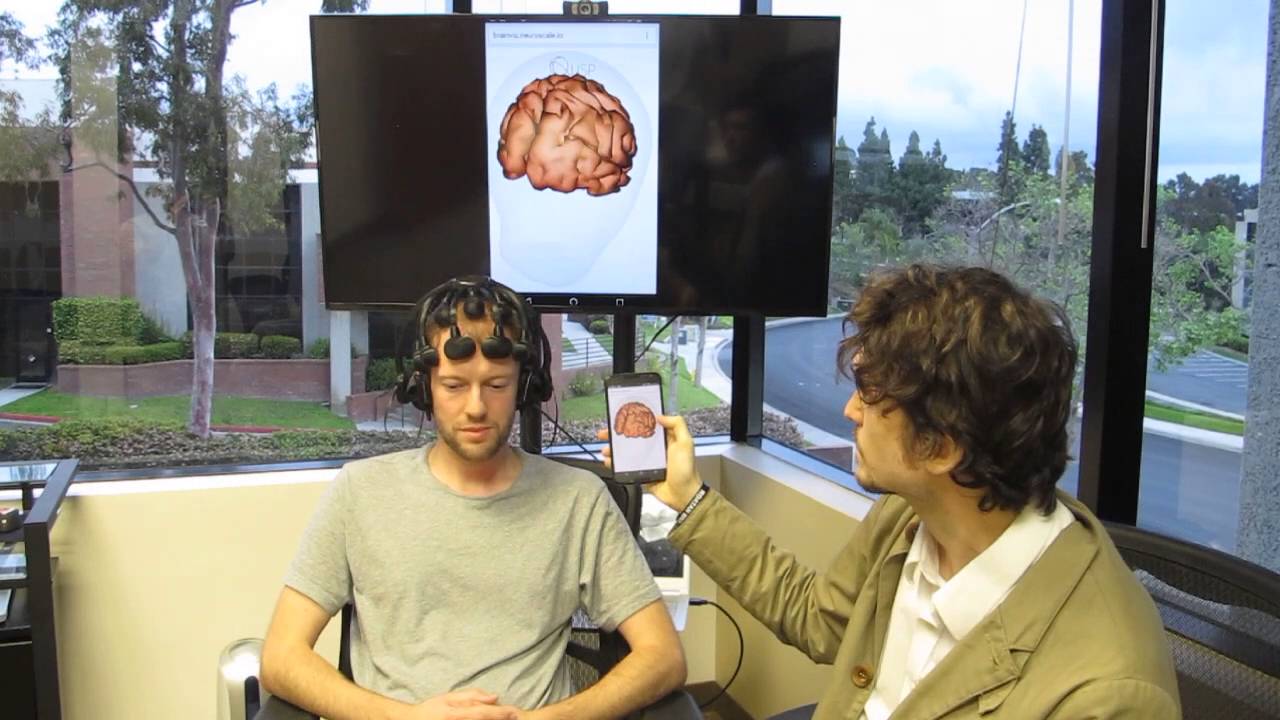

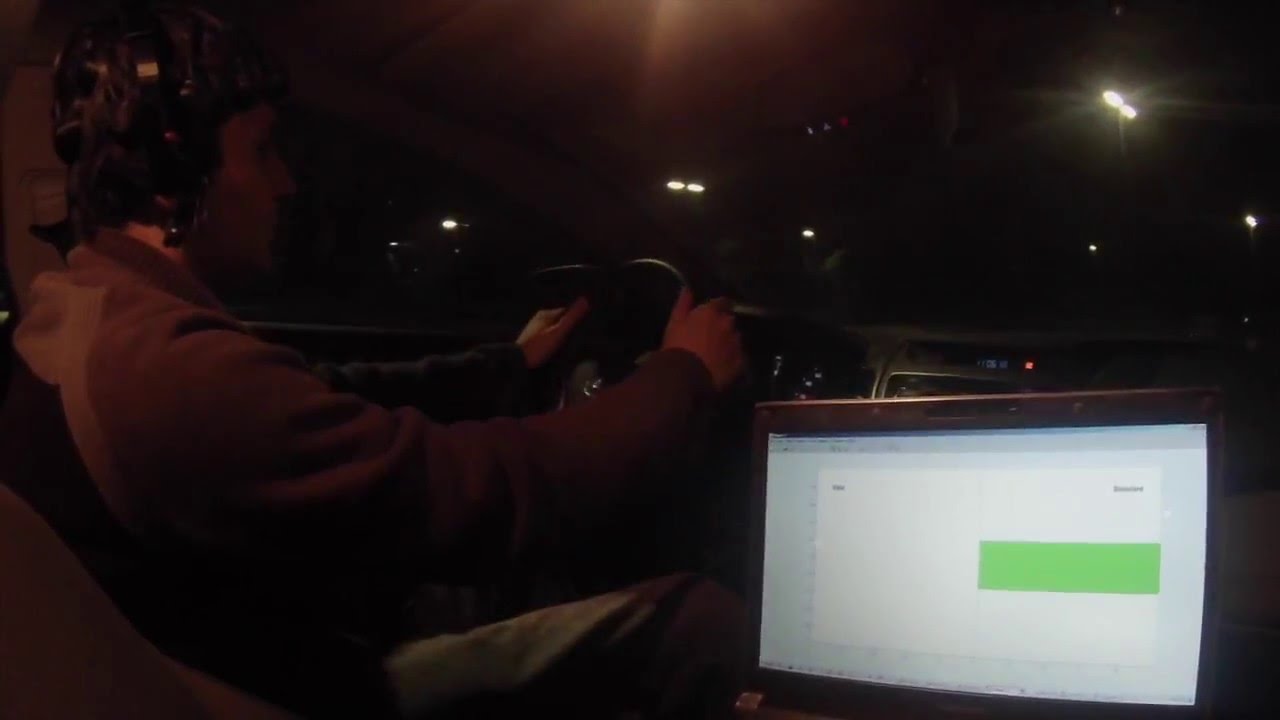

This video demonstrates a real-time wearable brain-computer interface (BCI) capable of detecting whether a car driver (here, Intheon CDO Nima Bigdely Shamlo) heard an unusual sound interspersed among regular sounds played over the car speakers. This is the first demonstration of a real-time, dry wireless EEG BCI system operating in a moving vehicle with real-time computation on the cloud (NeuroScale).

Detailed description:

The demonstration implements a variant of the “Auditory Oddball Paradigm” (Squires et al., 1975). Here, a regular sequence of identical “standard” tones is interspersed at random with rare (15% probability) “deviant” tones of slightly higher pitch. The car driver (our CDO, Nima Bigdely Shamlo) is instructed to keep count of the deviant tones while maintaining normal driving behavior. 19-channel dry EEG data (Cognionics Quick-20) is wirelessly streamed in real time to the NeuroScale cloud service over a 4G cellular connection. There, the brain’s electrical response to each tone (for instance, the N100 and P300 event-related potentials) is measured and passed to a machine learning model, which estimates the probability that the subject heard a standard tone or a deviant tone. This probability is transmitted back to the car, where it is displayed on a mobile application. For reference, the visualization displays a bar whose length denotes the probability of the tone being a standard (right-facing bar) or a deviant (left-facing bar). A green bar denotes a correct classification, while a red bar denotes an incorrect classification (note that in the real world we don’t have ground truth; it is used here only to demonstrate BCI performance). The entire round-trip processing time here is under 900 ms. The BCI pipeline was implemented in NeuroPype and deployed on the cloud using the NeuroScale REST API. Sign up for the NeuroScale beta at www.neuroscale.io!
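To make the stimulus and display logic above concrete, here is a minimal Python sketch. It is not the NeuroPype pipeline or the NeuroScale API. The function names, the independent per-tone draw, and the 0.5 decision threshold are all assumptions made for illustration; only the 15% deviant probability and the bar conventions (right-facing for standard, left-facing for deviant, green for correct, red for incorrect) come from the description.

```python
import random

DEVIANT_PROBABILITY = 0.15  # rare-tone probability stated in the description


def make_tone_sequence(n_tones, p_deviant=DEVIANT_PROBABILITY, seed=None):
    """Return a list of 'standard'/'deviant' labels.

    Assumption: each tone is drawn independently; the actual demo may
    constrain the sequence differently.
    """
    rng = random.Random(seed)
    return [
        "deviant" if rng.random() < p_deviant else "standard"
        for _ in range(n_tones)
    ]


def bar_for_prediction(p_deviant, true_label):
    """Map a classifier probability to the bar shown in the mobile app.

    Assumption: the predicted class is whichever has probability >= 0.5,
    and the bar length is the probability of that predicted class.
    """
    predicted = "deviant" if p_deviant >= 0.5 else "standard"
    length = p_deviant if predicted == "deviant" else 1.0 - p_deviant
    return {
        "direction": "left" if predicted == "deviant" else "right",
        "length": length,
        # Ground truth is only available in the demo, not in real-world use.
        "color": "green" if predicted == true_label else "red",
    }


if __name__ == "__main__":
    tones = make_tone_sequence(20, seed=1)
    print(tones)
    print(bar_for_prediction(0.8, "deviant"))
```

In this sketch a classifier output of 0.8 for a tone that really was a deviant gives a green, left-facing bar, while the same output for a standard tone gives a red one.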

Tags: NeuroScale, Video, Auditory Oddball, BCI, Cognitive Monitoring, Driving, Dry Electrodes, Mismatch Negativity, EEG, NeuroPype